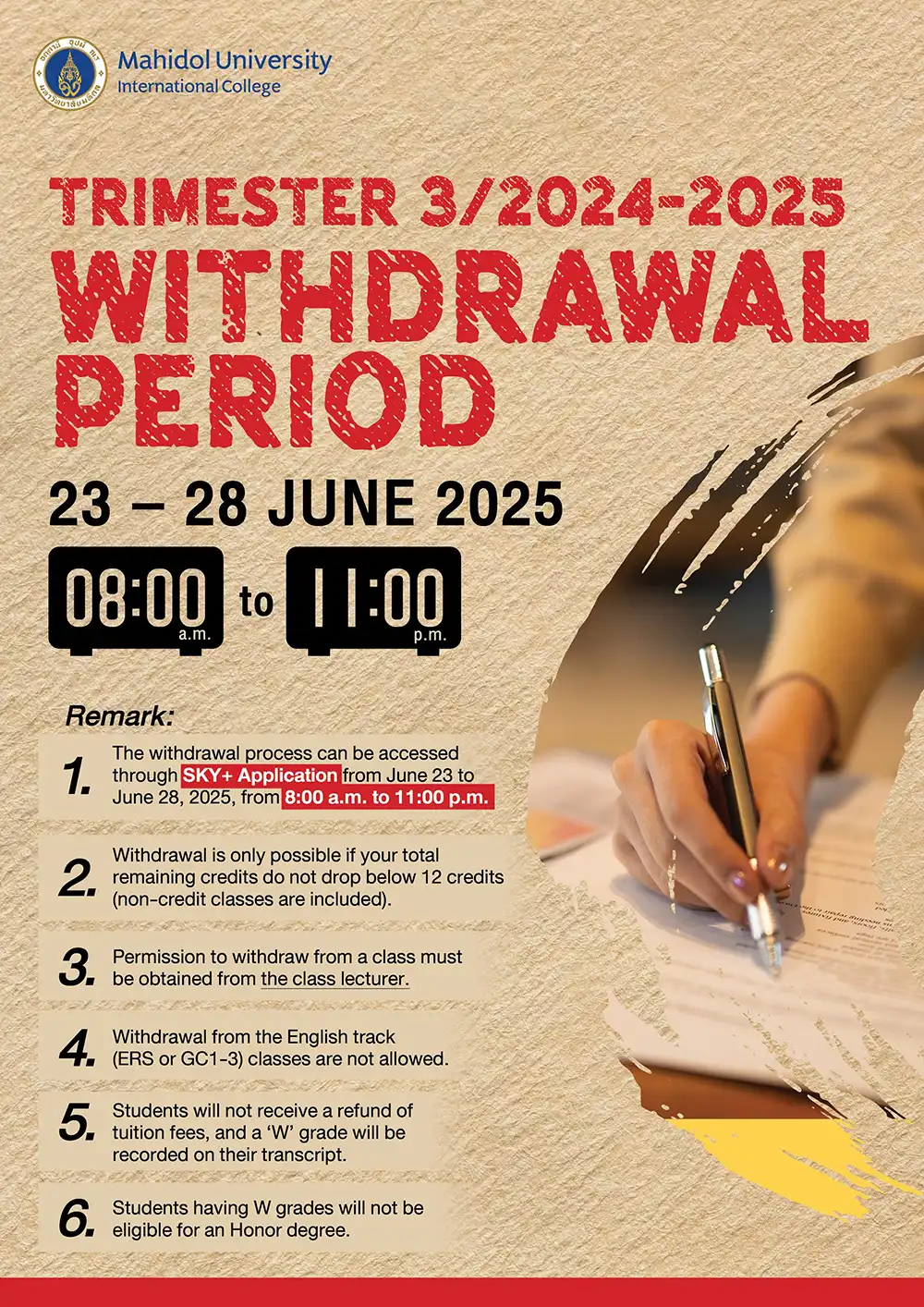

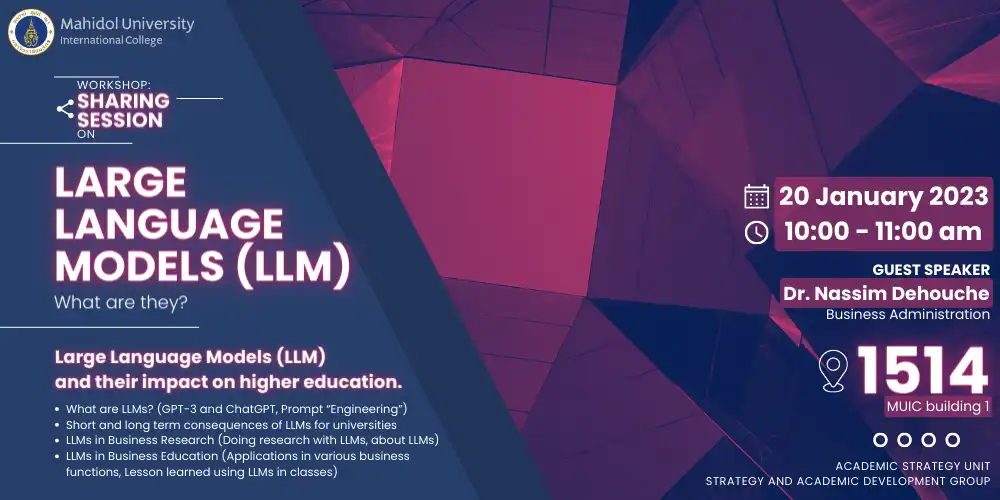

Large Language Models (LLM)-What are they?

January 20, 2023 2023-05-08 7:55

Title: Large Language Models (LLM)-What are they?

Date & Time: Friday, 20 January 2023 from 10:00 – 11:00 a.m.

Venue: Computer Lab 1514, MUIC Building 1

Conductor: Dr. Nassim Dehouche

The Strategy and Academic Development Section and the Business Administration Division organized the workshop entitled ‘Large Language Models (LLM)-What are they?’. The workshop aimed to introduce participants to the use of OpenAI's GPT for producing academic work, to raise awareness of its short- and long-term consequences for both students and universities, and to let participants experience how the system is used in research and classroom settings.

As the world changes ever faster, Artificial Intelligence (AI) is gradually becoming part of every aspect of our lives. New technologies and tools are designed to respond promptly to commands, transfer laborious work from humans to machines, and learn human behavior in order to predict what we might want, making our lives easier and more connected than ever before. Alongside the rapid growth of digitized data, there has been a revolution in the way machines learn, enabling them to complete tasks faster and to manipulate language. One of the AI applications that has benefited the most from this advancement is the chatbot. It was formerly used as a tool to automate tasks performed regularly and at specific times, to lift burdens from employees so they could refocus their attention on other important tasks, and to provide instant responses. With advances in Machine Learning and Natural Language Processing (NLP), the chatbot has developed into more than a conversational text generator: it is now a powerful automated content creator that can take a conversation to an unprecedented level. Some of the well-known sites and tools that have adopted the technology include Tome, Lexica, Elicit, YouChat, Chatsonic, DALL·E, and the most refined AI text generator, ChatGPT.

Since its earliest version, GPT has been based on the Transformer model, a deep learning architecture built on artificial neural networks, which are loosely inspired by the learning process of the human brain. The Transformer uses a self-attention technique to weigh the significance of each part of the input data and to down-weight the parts that are irrelevant. This technique is primarily used in NLP, a subfield of AI that combines computational linguistics, machine learning, and deep learning models to help machines process and understand human language so that repetitive tasks can be performed automatically. NLP has been gaining popularity with Large Language Models (LLMs), which are now applied across many types of NLP applications: chatbots, spam detection, machine translation, text generation, and speech recognition (Amazon Alexa, Google API, etc.), the last of which is commonly found in various kinds of gadgets.
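The self-attention idea described above can be illustrated with a minimal sketch. It is a simplification and not how GPT itself is implemented: a real Transformer first passes each token through learned query, key, and value projections and uses many attention heads, whereas here the raw token vectors play all three roles. The function names and the toy vectors are hypothetical and chosen only for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Simplified scaled dot-product self-attention.

    Assumption: no learned projections, so each input vector serves as
    its own query, key, and value.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Weighted average of all token vectors: similar tokens count more.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy "token" vectors: the first two are similar, the third is not.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the input. In this toy input the first token gives more weight to the second, similar token than to the third, dissimilar one, which is the "weigh the significant, diminish the irrelevant" behavior in miniature.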

ChatGPT is currently one of the most hyped AI systems in the industry. Its uniqueness lies in its ability to generate original, high-quality, coherent, human-like text, achieved through a network that has been fed hundreds of gigabytes of text data. ChatGPT is built on the Generative Pre-trained Transformer (GPT) architecture, in which the model is trained to capture the patterns and structure of natural language while taking into account the context and dynamics of the conversation. With the integration of Reinforcement Learning from Human Feedback (RLHF), the current version of GPT has been improved to produce natural-sounding text; some might even say that it is indistinguishable from human-written text, and that is where the problem arises. As the AI is fed text, paragraphs, data, descriptions, and so on, it learns the patterns and structure of what to answer and how to sound like a human. As a result, the difference between machine-authored and human-authored writing has become less noticeable, and the errors in machine-generated text are becoming subtler and harder to spot. This poses a number of challenges to the education community and to industry as a whole.

Firstly, it puts pressure on universities and lecturers to work harder to detect the use of AI in assignments and to design more creative questions that require deeper analysis and comparison, in order to prevent plagiarism with technology like ChatGPT. As for the research community, since GPT draws on massive data resources and advanced language capabilities, it can produce results that are hard or even impossible to validate or reproduce with traditional research approaches.

GPT-3 has sparked concern over academic integrity, as students use ChatGPT to do their assignments. There is also a concern that the need for university graduates in certain fields may decline, as the machine can work better, faster, and more cheaply. In addition, it may disrupt the traditional classroom and the way information is delivered, shared, and consumed. The GPT hype among students is making schools worldwide reconsider their academic policies and strategies. The AI is thus pushing schools to come up with new syllabi and more creative teaching and learning approaches, while setting higher benchmarks for the quality of the work produced.

Regardless, ChatGPT is here to stay, as the number of registered users has been rising steadily since its launch in November 2022. Adopting full-fledged GPT in the classroom may raise the eyebrows of many academic staff, but its advantages still stand strong against those criticisms, especially in terms of data availability and accessibility. So, rather than seeing GPT as an enemy and being surprised or frightened by what it makes possible, we need to train educators to be ready for the arrival of AI in education, and emphasize the importance of healthy relationships between teachers and students as they navigate this new technology.